Testing the Blind Interview Process for an Internal Junior QA Position

Let's look at how we streamlined the hiring process in our Software dept.!

This year we’ve done a bit of hiring in SparkFun’s Software department, and with each new hire we’ve focused on dialing in our interview process. Earlier in the year we hired three developers with varying skill levels and experience, so with each round of candidates we wanted to make sure we found the best fit for the department while making the interview experience as pleasant as possible.

We settled into the following format:

- Phone Screen: Lets us learn about the candidate, explain the position and introduce the company to see if there’s a match.

- Code Repo: Allows us to see some samples of the candidate’s code and how much or little of it is documented, and helps us figure out the candidate’s skill level.

- Onsite Interview: General code questions; meet with the team; short, in-person coding exercises; and of course a tour of SparkFun’s headquarters.

Our next challenge was to hire a Junior QA Analyst internally. We decided to go internal-only on this position because:

- Current employees know how our internal tools work and have domain knowledge already.

- We wanted to create a gateway for more internal hires into our department, which has historically hired externally.

As usual, we first defined job requirements and a job description, keeping in mind that we did not want this position to require any coding or web development experience. At first we only received one applicant, but after a second reminder that the position required zero coding experience, we ended up receiving 12!

Since this was an internal-only posting, we each admitted that we had certain biases towards some candidates. We had worked closely with some of the candidates and hardly at all with others. After a recent company Lunch & Learn on Inclusion and Diversity brought up blind interviews (see why blind interviews are a thing) as a way to combat our biases, we decided to try out our own blind section for this interview process. These are the core skills that the Junior QA Analyst needed:

- Clear, concise written communication

- Interest in learning coding and web development

- Interest in testing all the things

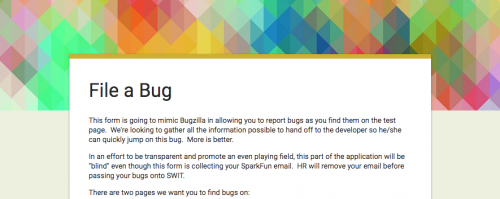

We also wanted to make the take-home test as work related as possible to give candidates a taste of what the job might be like. We planted several bugs (over 10) on two pages (main home page and a product page) of our public-facing site, and hosted it internally for the candidates to visit and then file “bug reports” via a Google Form. The form asked for a summary, description, steps to reproduce and “what do you think is wrong / how would you fix it?” questions.

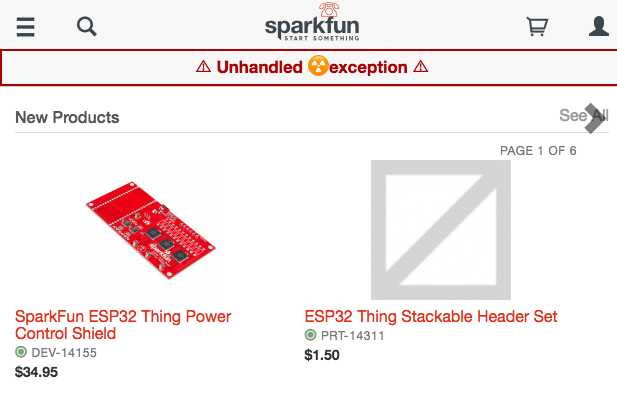

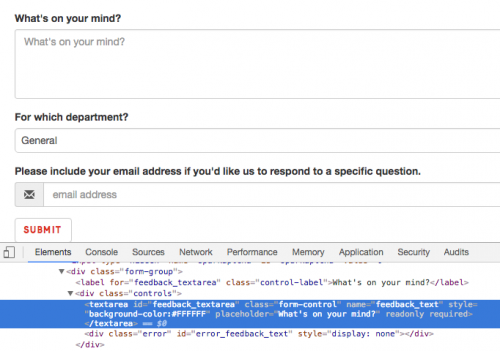

We planted a variety of bugs: broken links, fake image URLs, input fields set to readonly, different logos in different browsers, broken JavaScript, common typos/math errors and more. We wanted to see if candidates were checking various screen sizes, browsers and the JavaScript console. We also wanted to get an understanding of their current development skills, if they had any. For example: could they figure out that the link to open the modal is looking for “#shipping-restrictions”, but the modal’s ID is actually “#shipping_restrictions”, so nothing happens when you click it? Most importantly, we wanted to see how well a candidate could describe a bug. Figuring out what went wrong was just gravy on top. We also asked the candidates to only spend 1-2 hours on it because we wanted to respect their time.
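For readers curious how a mismatch like the modal link could be caught mechanically, here is a minimal sketch. It is not something we used in the exercise: the markup is a made-up reproduction of the planted bug, and the `AnchorAudit` and `dangling_targets` names are hypothetical. It simply lists in-page link targets that have no matching element ID.

```python
from html.parser import HTMLParser


class AnchorAudit(HTMLParser):
    """Collect every element id and every in-page link target (href="#...")."""

    def __init__(self):
        super().__init__()
        self.ids = set()
        self.targets = set()

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if not value:
                continue
            if name == "id":
                self.ids.add(value)
            elif name == "href" and value.startswith("#") and len(value) > 1:
                # Store the target without the leading "#".
                self.targets.add(value[1:])


def dangling_targets(html):
    """Return in-page link targets that match no element id on the page."""
    audit = AnchorAudit()
    audit.feed(html)
    return sorted(audit.targets - audit.ids)


# Hypothetical markup reproducing the planted bug: hyphen vs. underscore.
page = """
<a href="#shipping-restrictions">Shipping restrictions</a>
<div id="shipping_restrictions" class="modal">...</div>
"""

print(dangling_targets(page))  # ['shipping-restrictions']
```

A candidate with no coding background could reach the same conclusion by inspecting the link and the modal in the browser's developer tools, which is closer to what we were hoping to see.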

Here are a few screenshots of some bugs, since the site was only available internally:

First we met with everyone in person, in place of the usual phone screen, and went over the take-home test. Then the candidates were given nine days (spanning two weekends) to complete it. Once the Google Form closed, HR took the responses and swapped each candidate’s name for a numbered label, e.g. “Person 1.” We had 10 candidates complete the take-home with a total of 91 reported bugs!
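The name swap itself is simple enough to sketch in a few lines. This is only an illustration under assumptions: HR did the swap on the form responses, and the field names, the sample rows and the `blind_responses` helper below are all hypothetical. The shuffle is also our own assumption, added so that the numbering gives no hint about alphabetical order.

```python
import random


def blind_responses(responses, seed=None):
    """Replace each candidate's name with a numbered label such as "Person 1".

    Returns the blinded responses plus the name-to-label key, which only
    the person doing the swap (HR, in the post) would hold on to.
    """
    names = sorted({r["name"] for r in responses})
    # Assumption: shuffle so the numbering does not mirror alphabetical order.
    random.Random(seed).shuffle(names)
    key = {name: f"Person {i}" for i, name in enumerate(names, start=1)}
    # Build new rows so the original responses are left untouched.
    blinded = [{**r, "name": key[r["name"]]} for r in responses]
    return blinded, key


# Made-up form rows; the field names are illustrative only.
responses = [
    {"name": "Alex", "summary": "Logo differs between browsers"},
    {"name": "Sam", "summary": "Quantity field is read-only"},
    {"name": "Alex", "summary": "Broken link in footer"},
]

blinded, key = blind_responses(responses, seed=7)
for row in blinded:
    print(row["name"], "-", row["summary"])
```

Keeping the key separate from the blinded rows is the point of the design: the reviewers only ever see the labels, and the names are matched back up after scoring is done.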

As a team, we went through all the responses and rated the “quality” of each bug report from one to five. There were some bugs that were invalid or duplicates, so those didn’t get any points. Some candidates found bugs that we didn’t plant and are valid in production, so of course they got points for those. The baselines that we established for the scores, from one to five:

- Not correct or not helpful

- Kinda on the right path, but not there

- Doing ok

- Good - found the bug, documented it well

- Great - found the bug, documented it well and had good ideas on how to fix or what was wrong.

We chose our top three based on their total score (sum of their quality scores) and the number of fives they had. Conveniently, there was also a noticeable difference between the third- and fourth-ranked applicants, so having three as our final round really made the most sense.
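The ranking can be sketched as a small sort. This is a sketch under assumptions rather than the exact procedure we followed: it treats the total score as the primary criterion and the number of fives as the tiebreaker, which is one reasonable reading of the rule above, and the scores below are invented for illustration. Invalid or duplicate reports are entered as 0.

```python
def rank_candidates(scores):
    """Order candidates by total score, then by number of fives.

    `scores` maps a blinded label to its per-report scores:
    0 for an invalid or duplicate report, otherwise 1 to 5.
    """
    def sort_key(item):
        label, marks = item
        # Negate so that higher totals and more fives sort first;
        # the label keeps the ordering deterministic on full ties.
        return (-sum(marks), -marks.count(5), label)

    return [label for label, _ in sorted(scores.items(), key=sort_key)]


# Invented scores, not the real results.
scores = {
    "Person 1": [5, 4, 0, 3],  # total 12, one five
    "Person 2": [5, 5, 2],     # total 12, two fives
    "Person 3": [4, 4, 4],     # total 12, no fives
    "Person 4": [2, 1, 0],     # total 3
}

ranking = rank_candidates(scores)
print(ranking)      # ['Person 2', 'Person 1', 'Person 3', 'Person 4']
print(ranking[:3])  # the top three move on to the in-person interview
```

Because totals reward the number of reports as well as their quality, a rule like this favors candidates who file many solid reports over those who file one excellent one.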

After notifying our top three about the next step, which was a short, in-person interview, we looked at our fourth- and fifth-ranked individuals who had done really well, but just didn’t quite compare to the top three. We realized that this grading system was biased toward those with previous development experience, but we figured that we would be able to get someone more experienced up to speed faster. Next time we open up this type of position, we may want to ensure that previous dev experience doesn’t have as much of an advantage as it did this time.

Since this was an internal-only position, we had the opportunity and felt that it was important to provide feedback to all the candidates. Our QA Engineer met with each one to discuss what they did well, what they should work on and what resources to look into.

What we really liked about making the test blind was that we only looked at what the candidate provided. We didn’t look up their past bug reports or past performance. With an external candidate, we wouldn’t have had that information either. We didn’t give someone that we enjoy working with higher scores. We came to a consensus on our top three much faster than we would have without these objective standards. We were also hoping that the candidates enjoyed the experience too, and the feedback has been positive! Our top candidate feels that they earned the new position on merit rather than biases or office politics, and hopes this type of blind exercise shows up in other job openings. Others believe that it helped to put everyone on the same playing field… which was exactly our goal of the exercise!

We’re looking forward to including more exercises like this for each open position when possible. We feel that the anonymous nature of the “blind” test helped us focus on picking the person with the best skills for the job in a timely fashion, and the candidates felt that they were being compared evenly. Win-win!