A little over a year ago, Nate introduced you to Data. Data may still be in beta (Gmail was in beta for over five years), but that doesn’t mean we don’t need a terms of service.

The terms of service codify the ground rules of data.sparkfun.com. SparkFun wants to be transparent, which is why our official TOS, while still containing the "prevent the lawyers from freaking out" legalese, includes a handy "basically" summary section. Clear, open, honest, transparent.

Visualization of the SparkFun beehive, powered by Data! This shows the bees leaving the hive in the morning.

So the rules of the road, as they are now and how they’ll exist moving forward, are:

- Users can create public or private streams of data.

- All public streams are listed on data.sparkfun.com. Private streams are excluded from the lists.

- Private streams are still visible if a user knows the public key.

- Stream management is only possible with a stream's private key.

- Streams are limited to 100 updates every 15 minutes (900 seconds ÷ 100 updates works out to an average of one update every nine seconds).

- Streams are limited to 50 megabytes of data.

- Once a stream exceeds 50 megabytes, the excess data will be aged off.

- We will not sell your data. We do not collect any personally identifiable information.

- We keep standard HTTP access logs of the service, which include the IP address of each request; these logs are kept for 90 days.

- We do not guarantee your data gets to us correctly.

- We do not guarantee we won’t lose your data; we don’t expect to, but things happen.

- We make no guarantee of uptime. We want it to be up, but again, things happen.

- We will remove data from streams after 120 days of inactivity.
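If you log data from your own device or script, it can help to enforce the update and inactivity limits on the client side before the server does. The sketch below is a minimal, hypothetical helper, assuming a sliding 15-minute window (the service's actual windowing method isn't specified here) and counting only writes toward activity. The names `UpdateThrottle` and `is_inactive` are illustrative, and the code makes no HTTP calls to data.sparkfun.com; you would call `try_send()` before each upload and skip or queue the update when it returns `False`.

```python
import time
from collections import deque

# Limits taken from the rules above; class and function names are hypothetical.
MAX_UPDATES = 100          # updates allowed per window
WINDOW_SECONDS = 15 * 60   # 15-minute window = 900 seconds
INACTIVITY_DAYS = 120      # data may be removed after this many days without writes


class UpdateThrottle:
    """Client-side sliding-window limiter (an assumption about how the limit is counted)."""

    def __init__(self, max_updates=MAX_UPDATES, window=WINDOW_SECONDS):
        self.max_updates = max_updates
        self.window = window
        self.sent = deque()  # timestamps of sends still inside the window

    def try_send(self, now=None):
        """Return True and record a send if one is allowed at time `now`, else False."""
        now = time.time() if now is None else now
        # Discard timestamps that have slid out of the 15-minute window.
        while self.sent and now - self.sent[0] >= self.window:
            self.sent.popleft()
        if len(self.sent) < self.max_updates:
            self.sent.append(now)
            return True
        return False


def is_inactive(last_write, now=None, days=INACTIVITY_DAYS):
    """True if no write has happened for at least `days` days (reads are not counted)."""
    now = time.time() if now is None else now
    return now - last_write >= days * 24 * 60 * 60


# Even spacing that stays under the cap: 900 s / 100 updates = 9 s per update.
print(WINDOW_SECONDS / MAX_UPDATES)  # 9.0
```

A sliding window is stricter than simply sending once every nine seconds: it also lets a device send a short burst and then back off, while never exceeding 100 updates in any 15-minute span.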

The big change is that last bullet. If your stream goes inactive, we want the ability to remove its data. One cause of our last Data outage was old data, probably no longer in use, sitting on our servers. We ran an analysis and found that purging old data saves us a lot of space.

Our goal isn’t to stop you from pushing data to a stream, and it isn’t to get rid of data that you are using. We needed a threshold for when data can be removed, and we decided 120 days of inactivity was a reasonable bar.

This will take effect over the next couple of weeks. This post is our first announcement; next we’ll add the official terms of service to Data, much like we did on SparkFun, and only after that will we start removing data.

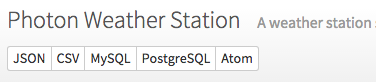

Download your data at any time

Remember, you can download your data from the site at any time. We offer five different download options; hopefully one of them works well for you.

That beehive project looks interesting. Has it been written up on the site yet? Specifically, what are you using for that visualization? It's much nicer than the JavaScript mess I have going.

Hi Eric, the tool used to create the chart is called analog.io. More info here: https://hackaday.io/project/4648-analogio-a-full-stack-iot-platform

The changes seem very reasonable. The Wolfram Data Drop provides only thirty-day retention for its "Starter Level" (free) data bins, so this is far more favorable. Thanks for creating and maintaining this service. It's awesome!

Ruh roh... Looks like I'd better freshen up what's more or less a static stream...

Question: Does "inactivity" include read access, or only writes/updates?

This is a great question. Right now we have defined inactivity only in terms of writes, which is why we let you download your data. I've never thought of Data as a static data hosting service. I'm glad this conversation is happening; it's what we need to make sure our users are getting what they need.

I have a stream with all of two entries that's used for update checks by programs I wrote. They check in with that stream to see what the current version is and which stream they should use to pull an update. (I also wrote a utility that encrypts a binary file, converts it to ASCII via Base64, splits it into smaller chunks, and uploads them to a Phant server. It's a pretty nifty way of distributing updates, and it'll lend itself nicely to block-level update downloads once I get a round tuit.)

Thanks for offering this! I hope my purchases of Electric Imps and sensors contributes to keeping this option available.

Keep buying cool IoT gear and we'll keep making it!