So, here’s a thing. It’s a sweeping generalization on my part, but please indulge me for a bit. In our line of work, I tend to put people into one of three groups: you’re primarily a hardware-oriented person, you're primarily a code-oriented person, or you’ve achieved some amount of fluency in both, making you “hard core enough for the scene.”

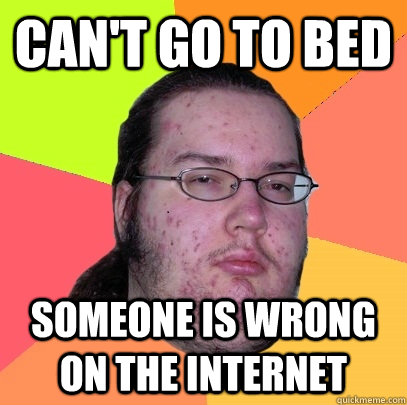

But every time I find myself browsing through a forum and the topic turns to code, it invariably erupts into a code-infused flame war between all the code-ly types. It really is worse than a debate over religion. And any aspect of code will set these people off: variable declaration, choice of libraries, structure, best practices, comments…and I think the biggest one, languages. “Your code doesn’t work? Wait, you’re using JavaScript? Well, there’s your problem right there. You should be working in Python! What about C++?” Then somebody pipes up saying, “Why would anyone ever use C++?” They then list their reasons for hating it. Nothing like an over-inflated sense of self-importance coupled with anonymity to bring out the best in people. Hooray, internet!

Now, all cards on the table, I tend to be more of a hardware person. I became an electrical engineer not just because I had an interest in electricity, but because I was bad at topics where there could be multiple lines of interpretation. Math and science seemed to be a good way to go, you know? I wanted to have something concrete under me in my professional life, something where I wouldn’t be at risk because I made an incorrect assessment of a task. Something where the rules of engagement were well-defined would suit me and my limited ability to understand human nuances quite well. And yet, even in circuit design there is room for creativity, but the laws of physics keep that creativity loosely bound. I would find my home in circuit design.

And as such, I generally hold the opinion that writing code is a necessary evil, at least where embedded (or otherwise) electronics are concerned. Because, let’s face it, there are some things that are just way easier to get done with a microcontroller. Right? How much time do you want to invest in any given project? My drive for an analog solution at all costs isn’t nearly enough for me to swear off writing a little code. But I’m definitely more at home with an iron in my hands rather than a keyboard under them. That said, I can still hack my way through with C well enough to get all my projects done. A datasheet and Google are usually enough reference. And I might peruse a forum for an answer, but I sure won’t post anything. Why? Because of all the vitriol I’ve witnessed on various online forums. Hey, I’m a snowflake, I’ll admit it. I can find my answers without subjecting myself to that kind of abuse.

But let’s look at this critically. Can it possibly be true that all primarily code-oriented people are just so territorial that you can’t have a civil online conversation about code with any of them? Without having to do any real soul searching, of course the answer is no. So why does it look to me as though so many more arguments come about over code than hardware?

First, let’s look at the numbers. Just go to Reddit and check out how many people subscribe to which subreddits. For example, Electrical Engineering has 32K+ subscribers (give or take; these numbers are a few months old). Electronics has 69K+ subscribers. But then you’ve got Python with 163K+ and JavaScript with 107K+, just to name a couple. But is this a good data set? Maybe this just isn’t where hardware people hang out. Heading over to Wikipedia, we find that the estimated number of EEs in the country is 183,770, while the number of software devs looks to be something north of half a million. That supports what Reddit is telling us. Now of course, these lines of division aren’t hard and fast, but I would suggest that they at least describe trends in the population. Generally, there are many more people working in coding positions than hardware design positions. And honestly, I’d be amazed if this surprised anyone.

So just by the numbers of people involved, you’re more likely to come upon a code-induced flame war than one based on hardware design. But what about the subject matter? Programming languages are human constructs, subject to lots of interpretation as to how to implement one best. I mean, it literally is a language, just like any spoken language. And it follows that as there are grammar nazis for English (or any other spoken language), there will be grammar nazis for Python, and they will be just as mouthy on the internet. Add to that some of the entrenched loyalties people maintain for their favorite language, and you’ve set the stage for some wild times.

On the other hand, you’ve got a science (meaning hardware design topics) that doesn’t change all that much over time, and most of the rules are known. Surely there’s less to argue about…? Well, just pop over to the diyAudio forums and have a look around. That should clear up any misconceptions about how there’s less arguing over hardware design. But while that may be the case, I have to think there’s still some truth in my statement that there should be less to argue about. Maybe?

Why are there more code-related flame wars than hardware? It’s got nothing to do with being a “code person” or a “hardware person.” These are just people who are passionate about their favorite topics and seem to enjoy being right and making everybody else deal with it in the forums. And there are more people actively involved in writing code than there are designing hardware, so likewise there are going to be more topics of conversation visible in the public sphere. Lastly, it’s a volatile topic because it’s a human construct. Nobody ever says, “I think Ohm’s law is stupid; why do you even use that?” If you’re designing hardware, you’re going to have to use Ohm’s law. But you've got a choice about the code you write and the language you use. And you know what they say about opinions, right? Humans will be humans.

All of this can make advancing more difficult than it has to be. When explaining electronic concepts, I try really hard to make it clear that while some design choices may be better than others, there are still many paths to success. I’ve seen less of that sort of acceptance and encouragement in code circles, but I’m sure it must be out there somewhere. If you’re one of those who are always trying to take your game up a notch (and who among us isn’t?), whether it’s your code or your circuit chops, try to take the negative waves with a grain of salt and don’t reciprocate. Cultivate a beginner’s mind and maintain it through your professional life. And remember that, either by age or experience, you’ll be the expert one day.

Coders who work in the industry for a while probably bear the scars of ill-conceived architecture, language and practice, have probably accepted blame, lost sleep, sanity and all but the tiniest shred of hope for software projects that were doomed. They have bought into bullshit like Object Oriented Programming (allow me this one specific example) and trusted peers, superiors, and the community at large, only to have their hearts broken by yet another death march. People argue about code because they care, both from a genuine love of the art as well as a primal instinct to avoid bad design choices which will inevitably come down on them. There are a lot of people putting their time and energy into figuring out problems that shouldn't be difficult, often fighting the very tools that promised to make things more reasonable. And when we do find a technology that is easy to reason about, and flexible and good not just because the college textbooks say so, then we want to shout it from the rooftops, we want to baptize our hapless helpless peers in the fire of new knowledge, proselytizing a new iconoclastic movement against the old, broken, and corrupt - because not only have we found new hope but a renewed sense of order and beauty, a clearer understanding of everything, because if we seek to model, mold and shape reality with the clever employment of our languages then what are we besides poets and philosophers and mages; and if our languages fail us what are we besides frauds.

so yeah, it gets to feel like a big deal sometimes.

That penultimate sentence could use some OO. :-)

As an embedded software engineer by trade, I am disheartened by your experience. I feel compelled to chime in with some encouragement.

I have found StackOverflow.com to be quick, flame-resistant, and typically the top Google hit for most of my coding questions, especially for unusual Git situations (I use the git command line and make mistakes). When questions are well written, the community is pretty good about suggesting solutions within the constructs of a specific language or development environment. They even actively discourage open-ended opinion questions like "Which single board computer is best for my project?"

I'd also throw out that there is a significant "science" to coding: Computer Science. Perhaps one route to overcoming flame wars is to focus conversations on objective mathematical principles. As an "Ohm's law"-like example, the "Big O" of an algorithm is the same regardless of the programming language.
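To make the Big O point concrete, here is a minimal sketch in Python that counts how many elements two search algorithms examine. The function names and test sizes are illustrative assumptions, not anything from the comment above. The counts depend only on the algorithm and the input size, so a port to C or JavaScript would report the same numbers.

```python
def linear_search_steps(items, target):
    """Count elements examined by a front-to-back scan: O(n)."""
    steps = 0
    for value in items:
        steps += 1
        if value == target:
            break
    return steps


def binary_search_steps(items, target):
    """Count probes made by binary search on a sorted list: O(log n)."""
    lo, hi = 0, len(items) - 1
    steps = 0
    while lo <= hi:
        steps += 1
        mid = (lo + hi) // 2
        if items[mid] == target:
            break
        if items[mid] < target:
            lo = mid + 1
        else:
            hi = mid - 1
    return steps


if __name__ == "__main__":
    # Search for the last element, the worst case for the linear scan.
    for n in (1_000, 1_000_000):
        data = list(range(n))
        print(n, linear_search_steps(data, n - 1), binary_search_steps(data, n - 1))
```

Growing the input a thousandfold multiplies the linear count by a thousand but only roughly doubles the binary search count, and that ratio is a property of the algorithms rather than of the language.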

I'm sorry, I didn't mean to imply that there's no science behind coding. There certainly is and I recognize that. But it's still different terrain because it's different science, and opinion-based commentary has an easier time of weaseling into the conversation.

I like the discouraging of open-ended opinion questions... mostly. I mean, we're humans, we gotta argue and discuss or life would be unbearably bland. But I like less of that when I need an answer.

To be honest, I find it all silly.

Coming from a Unix/Linux background of many years, we have the motto "Use the right tool for the job" or

"Write programs that do one thing and do it well. Write programs to work together. Write programs to handle text streams, because that is a universal interface."

I think code is like cars, we each like something different. Would be nice if we could all get along.

So while I can see how we all might have a different opinion about what to use, hopefully we can agree to work together.

I'm probably showing my age here, but it's only in the last decade or so that I had any choice in the hardware, language or operating system on anything that was thrown at me. Some I loved, some I hated. However, it did force me to be adaptable. My advice to anyone in this business - especially at the accelerated rates of change these days - is to be adaptable. Don't get tunnel vision on any specific pattern, methodology or language. A lot of times, a combination of all of them will help you build out a complex system that might not have otherwise been feasible. That's all I got, now get off my damn lawn :)

Sorry I am late to the party but I am going to chime in. If I need to write a device driver in Windows I use C (with maybe some assembly). Good luck doing it in anything else. If I want to do OpenCV on a Raspberry Pi to perform character recognition using KNN I would probably do it in Python because Numpy is easier to work with. If I was going to write the backend of a webserver accessing a DB I'd probably use PHP with MySQL.

Sometimes you can choose your language... other times there is little choice. People get upset over languages because sometimes it really does make a world of difference. Other times they are just being douchebags. The trick is to know the difference.

Pete,

Here are my thoughts.

I think most of the "code hatred" is really done on the side of people who deal with, or write in, multiple languages at the same time. An example of this is an IT person having to build an automation script to monitor servers or applications on the network. They may be forced to use a language that may not be the most adequate to use due to resource limitations, expertise limitations, or something else.

Overall, I think the issue is not hatred of the languages; it's more that socially awkward people have a hard time expressing their solution in a way that conveys, "Hey, your problem can be solved easily with this language because the people who came up with said language thought of that problem."

Why are we even talking about this? Find a subject on the Internet and you're going to find arguing about that subject. From cast iron pan groups on Facebook to how best to detail a car. It's what humans do. It's why we are special. And somehow, it works over the long haul to improve our lot.

This is why I'm a "hardware" guy: http://www.sandraandwoo.com/2015/12/24/0747-melodys-guide-to-programming-languages/

You know what is a nice dream? A nice, universal programming language that actually becomes universal. Not one that wants to be, but is only used by some people, and so it becomes yet another in a long list of languages to use. But, I doubt that will happen anytime soon, so the best thing for now is to be the best you can in one, and dabble to learn others as you go.

https://xkcd.com/927/

Except....

That hardware isn't physics, it's technology, and technology is a human construct just as much as programming languages are. And the rules are constantly changing. I remember when "physics" guaranteed that a 1 farad capacitor would have to be the size of a railroad car, that a single fiber optic cable could carry all the nation's phone traffic, that computers had to be the size of a building, that digital circuitry would never be reliable enough, small enough and cheap enough to use in aircraft, that a satellite ground station had to be the size of a house with an antenna to match, and on and on and on. Physics never dictated this, it was technology as driven by economics and human requirements.

And with great respect to you Pete, you've made the same mistake with your Ohm's Law analogy and possibly this whole article. Yes, Ohm's law is based on a fundamental physical law. In exactly the same way computer languages are based on fundamental mathematical laws, laws which have been through the review and proof process and are unarguable. For example, no one argues about Boolean algebra, just how it's implemented in a particular language. If you go to the fundamental bedrock supporting computing languages, the Turing Machine mentioned by 134773, there is no argument whatsoever. The arguments are about the implementations of languages that are all Turing complete.
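As a small illustration of the Boolean algebra point, the sketch below checks De Morgan's laws over every combination of two truth values. The function name is hypothetical, and Python is just one possible choice; the result is fixed by the algebra, and only the spelling of `and`, `or`, and `not` would change in another language.

```python
from itertools import product


def de_morgan_holds():
    """Exhaustively check both De Morgan laws over all two-input truth values."""
    return all(
        (not (a and b)) == ((not a) or (not b))
        and (not (a or b)) == ((not a) and (not b))
        for a, b in product((False, True), repeat=2)
    )


if __name__ == "__main__":
    print(de_morgan_holds())
```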

So your hardware folks exemplars should be ones who argue about which chip is better, whether a unit should have an on/off switch, whether a touch screen should be curved or not, how thin a phone should be, whose phone has a better plan, even. If you expand your "hardware" population to where they're dealing with the same level of abstraction as the language combatants, I think you'll find just as many hissy fits.

Eh... I think you're splitting hairs, sorta. While the tech may change (the physical size of a cap, for example), the rules underlying it (how the cap functions) do not. To take the example further, if I've got a power supply and I'm going to draw x amount of current from it and I want less than y amount of ripple, I need a cap of value z, and that doesn't change.
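The x, y, z relationship can be sketched with the common first-order approximation C ≈ I / (f · ΔV). This assumes a constant load current and a capacitor that discharges linearly between recharge peaks, with f being the ripple frequency (twice the mains frequency after a full-wave rectifier). The function name and the example values are assumptions chosen for illustration, not figures from the comment.

```python
def smoothing_cap_farads(load_current_a, ripple_v_pp, ripple_freq_hz):
    """Estimate the reservoir capacitance for a target peak-to-peak ripple.

    First-order approximation: C = I / (f * dV), assuming constant load
    current and linear discharge between charging peaks.
    """
    if ripple_v_pp <= 0 or ripple_freq_hz <= 0:
        raise ValueError("ripple voltage and frequency must be positive")
    return load_current_a / (ripple_freq_hz * ripple_v_pp)


if __name__ == "__main__":
    # Hypothetical example: 1 A load, 1 V ripple, full-wave rectified 60 Hz mains.
    cap = smoothing_cap_farads(load_current_a=1.0, ripple_v_pp=1.0, ripple_freq_hz=120.0)
    print(f"{cap * 1e6:.0f} uF")
```

A real design would round up to the next standard value and account for tolerance and ESR, but the underlying relationship stays the same whatever the physical size of the part.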

But I get your point. I'm specifically looking at hardware design from a very low level where there is little to no abstraction (which I LOVE, cuz I hate abstractions) and comparing it to code design which is necessarily highly abstracted and saying "WTF?" And I don't think you're wrong when you say that at a similar level "hardware" people will argue, too. But for the record, I don't think anyone's innocent here. I know that hardware people argue. All people do, right up to the last point that a misunderstanding can be perpetrated. I just tend to run into fewer of those sorts of nerd brawls where I hang. Unless it's Hackaday.

I started out as a hardware guy, around 1968. I was bending code by 1973. I've worked on so many platforms, and used so many languages, that it just blurs. Getting religious about any of these is more likely to reveal your insecurities about "the other side" (whatever that is) than to convince anybody that your solution is "the true solution". The more languages you master, the more you realize that they all have their strengths and weaknesses, and that none is a true panacea. Gee, shall we use an 80XXX processor, or hammer together a stack of AMD 2901 bit slice processors, and write our own instruction set? Who cares? Been there, done that, realized that hardware was becoming an off-the-shelf commodity around 1984, and moved fully into the software domain... And now I've come full circle, to find that playing with hardware is fun again, and writing the code that makes it sing is no big deal.

I have to laugh. Hardware people get along? Let me just post a pic of my arduino blinking an LED.

Your embedded C might look to me how my cold solder joints look to you.

Common denominator. People are a pain. All I need is I²R to keep me warm at night.

Lol Oh, man. The comments are definitely taking an interesting turn...

I tried to read this article, it sounded interesting. But then that Pirate animation thing. My head exploded. What a mess. Sorry, no comments.

Why can't politicians just get along? Why can't football fans just get along? Why can't neighbors just get along? Why can't coworkers just get along? Why can't extended family just get along? Why can't family just get along?

See a pattern? There is a known root cause and a solution. Let him who has ears, hear.