Academia has been talking about artificial intelligence for decades, and while the field has made amazing strides, there haven’t been many tangible effects for general users. With exciting new tools like TensorFlow for machine learning, and increasing processing power, we are starting to see improvements that we can begin to reap benefits from.

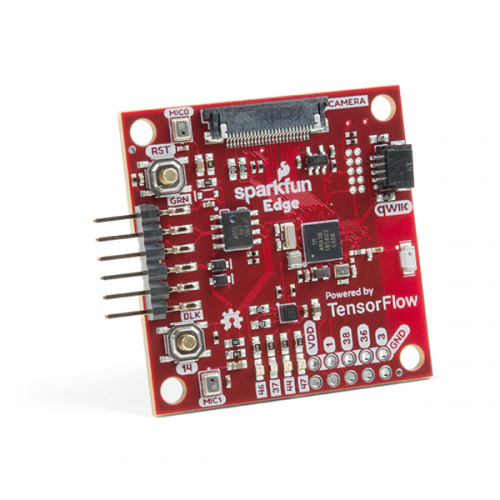

Introducing the SparkFun Edge!

I’m pleased to announce the SparkFun Edge. Powered by the Apollo3 from Ambiq, the SparkFun Edge allows us to finally poke around with edge computing and implement actual uses such as voice and image recognition.

But wait, you say, I’ve got a voice thing in my home I use all the time. How’s this different?

The major difference is that no internet is required. All the processing is done on our board, at the edge of your project. Alexa, Siri and Google Home are always listening, and always uploading audio data to the web for analysis. There are obvious privacy concerns, and some not so obvious global warming concerns with these always-on, always-connected devices. The future is in lower-power, smaller, more specialized assistive devices with limited or no connection to the internet. This is where the SparkFun Edge shines. It cannot order you a dozen eggs from Whole Paycheck, but the SparkFun Edge can activate a blender or hot-water heater, or do anything your microcontroller can do when it hears a certain word, with nothing but a coin cell battery for power. How? I'm so glad you asked!

At the heart of the SparkFun Edge is the Apollo3, the latest Cortex M4 from Ambiq Micro. Consuming just 8uA per MHz, this processor is possibly the lowest-power microcontroller on the planet. It's so low, in fact, that we’ve demonstrated voice recognition using nothing but a coin cell battery. Try that, Siri. Additionally, the Apollo3 has built-in BLE, 50 GPIO, 1MB of flash, 384KB of SRAM, and runs at 96MHz using less than 1mA at full tilt. With power cycling, the Edge can perform for days or weeks on nothing but a 3V CR2032. With tools like TensorFlow Lite, the incredibly complex world of machine learning can now be squeezed onto the SparkFun Edge. We’re pretty excited.
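As a rough sanity check on those figures, the sketch below multiplies the quoted 8uA per MHz by the 96MHz clock and estimates how long a coin cell would last if the core ran flat out. The 225mAh CR2032 capacity is an assumed nominal value, not a number from Ambiq or SparkFun. The estimate also ignores the rest of the board and the way a coin cell's usable capacity drops under load, so treat it as a best case on paper.

```python
# Back-of-the-envelope power math for the figures quoted in the post.
UA_PER_MHZ = 8.0     # from the post: 8uA per MHz
CLOCK_MHZ = 96.0     # from the post: 96MHz
CR2032_MAH = 225.0   # ASSUMPTION: nominal CR2032 capacity, not from the post


def active_current_ma(ua_per_mhz: float, clock_mhz: float) -> float:
    """Core current in mA when running continuously at clock_mhz."""
    return ua_per_mhz * clock_mhz / 1000.0


def runtime_days(capacity_mah: float, current_ma: float) -> float:
    """Idealized runtime in days for a constant current draw."""
    return capacity_mah / current_ma / 24.0


full_tilt_ma = active_current_ma(UA_PER_MHZ, CLOCK_MHZ)
days_at_full_tilt = runtime_days(CR2032_MAH, full_tilt_ma)

print(f"Core current at full tilt: {full_tilt_ma:.3f} mA")
print(f"Idealized CR2032 runtime, never sleeping: {days_at_full_tilt:.1f} days")
```

Under these assumptions the core draws 0.768mA, which squares with the "less than 1mA" claim, and an always-on core would last roughly 12 days. Sleeping between bursts of work is what stretches that toward weeks.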

We’ve been working with a team at Google to implement TensorFlow Lite for audio recognition, and have promising results. We’ve proven out the hardware including the microphones, accelerometer and camera interface, so we’re making the Edge available for pre-order today. We have documentation on how to set up and use the Ambiq SDK to implement the voice recognition demo, and hope to release image recognition examples soon. We’re also working on an Arduino port that should be available in the next few months. If you’re interested in playing with TensorFlow on embedded targets, or if you just want a wickedly powerful micro to replace your Uno, you should consider checking out the SparkFun Edge today.

Hey, Nate: (a) thanks for including the 32.768kHz XTAL! (b) How about adding the link to the Apollo3 Blue datasheet to the product page? advTHANKSance!

Good point! Listed now. It's here if you need it.

Awesome! Can't wait to get my hands on one of these. I'm continually impressed by SparkFun's work to bring cutting edge tech to the hobbyist market. I can't wait to see what comes next; keep it up!

P.S. One product I'd love to get my hands on is an electrochromic display. Rdot has some amazing technology, but they won't sell to hobbyists such as myself. It'd be amazing if SparkX could make a breakout for one of those.

Great Work Nate!

Yeah, but does it include an RTCC (Real Time Clock Calendar)? I can think of some nifty projects if it does...

Short answer: it looks like the µC is built for low power operation, and has some nice RTC features.

Long answer: page 547 of the publicly available datasheet (PDF) for the Apollo3 Blue says:

Thanks again! I managed to find time to glance over (though not in great detail) the datasheet. It does generally look good (although I'd like to see the Apollo3 Blue have a separate power pin for the RTC module -- this allows for a "backup power supply" that keeps the clock going e.g. during battery changes) -- good enough to get me a bit excited about it, especially at the price point of $15!

A closer look at the datasheet shows that the current for the Apollo3 Blue is around (doing the math) 1.3mA, with a "deep sleep 2" current down around 1.4uA! (Assuming I'm reading that correctly, DS2 will keep the RTC running.) Hmm... if I'm doing my "mental math" correctly, 2 AA alkalines powering it will die of "old age" before they run out of power if it "wakes up" for say, 0.5sec once per hour...
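For anyone who wants to check that mental math, here is a minimal sketch of the duty-cycle calculation using the numbers from the comment above: 1.3mA awake, 1.4uA in deep sleep, and awake for 0.5 seconds each hour. The 2500mAh figure for AA alkalines is an assumption (two cells in series double the voltage, not the capacity). Regulator losses, the rest of the board and battery self-discharge are ignored.

```python
# Average current for a wake-briefly, sleep-mostly duty cycle.
ACTIVE_MA = 1.3          # from the comment: approximate active current
SLEEP_UA = 1.4           # from the comment: "deep sleep 2" current
AWAKE_S = 0.5            # from the comment: awake 0.5 s per period
PERIOD_S = 3600.0        # from the comment: one wake-up per hour
AA_CAPACITY_MAH = 2500.0 # ASSUMPTION: typical AA alkaline capacity


def average_current_ma(active_ma: float, sleep_ma: float,
                       awake_s: float, period_s: float) -> float:
    """Time-weighted average of active and sleep current over one period."""
    asleep_s = period_s - awake_s
    return (active_ma * awake_s + sleep_ma * asleep_s) / period_s


avg_ma = average_current_ma(ACTIVE_MA, SLEEP_UA / 1000.0, AWAKE_S, PERIOD_S)
runtime_years = AA_CAPACITY_MAH / avg_ma / (24.0 * 365.0)

print(f"Average current: {avg_ma * 1000.0:.2f} uA")
print(f"Idealized runtime: {runtime_years:.0f} years")
```

The average works out to about 1.58uA, and the idealized runtime comes out to well over a century. That is far longer than any alkaline cell's shelf life, so the "old age" conclusion holds under these assumptions.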

Thanks! Too busy at the moment to look at the datasheet -- hopefully this weekend, but that sounds great!