I've been continuing to explore the ESP32 Thing. In a previous Enginursday, I built an OLED Clock that uses the ESP32 to automatically get the time from the internet. This week I wanted to build a robot that you can control from your web browser using the arrow keys on your computer. Stay tuned for a full tutorial on building your own on our learn.sparkfun.com page; for a brief overview, continue reading below.

The Hardware

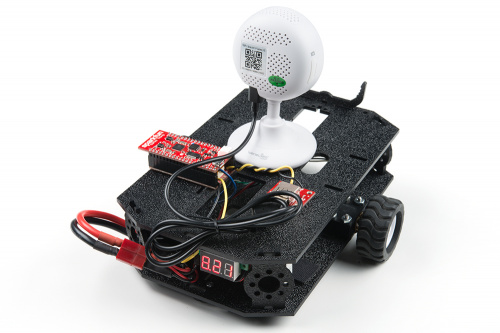

The robot is built around our Shadow Chassis, and the motors are controlled with our Serial Controlled Motor Driver (SCMD). Other parts include a 2200mAh battery, a USB-A Female Breakout (so I didn't have to hack apart a USB cable to power the camera), and a 5V DC/DC Converter. In addition to the ESP32 Thing, I used our Motion Shield's SD card slot to store the HTML file that the ESP32 serves to clients when they connect.

Having the HTML file on the SD card sped up development, because I could make changes to the file without waiting for Arduino to compile the code for the ESP32. The DC/DC converter is important because I needed to regulate the 6-8.4V battery voltage down to 5V. The higher efficiency of a switching regulator extends battery life, and since the camera and ESP32 together draw 500mA, a linear regulator would get quite toasty.
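To put rough numbers on the regulator choice: a linear regulator passes the full load current while dropping the excess voltage, so it turns (Vin - Vout) × I into heat. The C++ below is just that arithmetic, a sketch for intuition rather than anything from the robot's code. The switching efficiency you pass in is an assumption; check your converter's datasheet for a real figure.

```cpp
// Heat a linear regulator must burn: the whole load current flows through it
// while it drops the excess voltage (quiescent current is ignored here).
double linear_dissipation_w(double v_in, double v_out, double i_load_a) {
    return (v_in - v_out) * i_load_a;
}

// Best-case efficiency of a linear regulator: output power / input power,
// which reduces to Vout / Vin because input and output currents are equal.
double linear_efficiency(double v_in, double v_out) {
    return v_out / v_in;
}

// Battery power a switching converter draws to deliver v_out * i_load_a,
// given an efficiency figure between 0 and 1 (assumed, e.g. from a datasheet).
double switching_input_w(double v_out, double i_load_a, double efficiency) {
    return v_out * i_load_a / efficiency;
}
```

With a fully charged 8.4V battery and a 500mA load, `linear_dissipation_w(8.4, 5.0, 0.5)` works out to about 1.7W of heat at roughly 60% efficiency, dropping to about 0.5W near the 6V end. For comparison, an assumed 90%-efficient switcher, `switching_input_w(5.0, 0.5, 0.9)`, would pull about 2.8W from the battery versus 4.2W for the linear regulator at 8.4V.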

The Software

Getting started with this project left one big question in my head: "Can the ESP32 handle a video stream?" The answer I ended up with was: not sure, but it doesn't matter. What I didn't know was how the ESP32 would handle a link when a client connected to the web server. Would the ESP32 follow the link to the camera's image stream and store the image in memory? How big an image could I display before the ESP32 ran out of memory and crashed? Or is it up to the client to go to the image stream and create the image?

To find out, I found a public IP camera and embedded its video stream in my HTML page. What I discovered was that it doesn't matter whether it's an image or a video link: all the ESP32 does is send the HTML code to the browser, and it's up to the browser to figure out what needs to be displayed where. When the browser sees a link, it goes to that URL and retrieves the image or video itself.

The ESP32 and HTML code are on my GitHub. The HTML code checks for key presses and releases (specifically the arrow keys). When a key is pressed, a text string is sent to a separate page using an XML request. When text is sent to that page, the ESP32 scans the request to see what action it should take: drive forward/backward or turn left/right.
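On the ESP32 side, "scanning the request" can be as simple as looking for a known path in the HTTP request line. The C++ sketch below assumes the browser's XML request hits hypothetical paths such as `/forward`, `/backward`, `/left`, `/right`, and `/stop` (the last one sent on key release). The real strings in the project's code may differ, and the motor driver calls that would follow each command are left out.

```cpp
#include <string>

// Actions the robot can take. Stop is assumed to be requested on key release.
enum class Command { Forward, Backward, Left, Right, Stop, Unknown };

// Scan the request line the browser sent (e.g. "GET /forward HTTP/1.1") for
// one of the hypothetical command paths and map it to an action. The trailing
// space in each pattern keeps "/forward" from matching "/forwardx".
Command parse_command(const std::string& request_line) {
    struct Route {
        const char* pattern;
        Command cmd;
    };
    static const Route routes[] = {
        {"GET /forward ", Command::Forward},
        {"GET /backward ", Command::Backward},
        {"GET /left ", Command::Left},
        {"GET /right ", Command::Right},
        {"GET /stop ", Command::Stop},
    };
    for (const Route& r : routes) {
        if (request_line.find(r.pattern) != std::string::npos) {
            return r.cmd;
        }
    }
    return Command::Unknown;  // not a drive command, e.g. the page itself
}
```

In an Arduino sketch the same check is often written with `String::indexOf`; the idea is identical, and each recognized `Command` would then be translated into motor driver calls.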

The hard part was embedding the video stream in the HTML file. Each camera manufacturer has its own way to stream video. Some older cameras serve MJPEG over an HTTP link, but newer cameras use the Real Time Streaming Protocol (RTSP) instead of HTTP. RTSP isn't native to HTML5 and requires a JavaScript library to convert the stream. To keep the file size small, I took advantage of the fact that the camera can also serve snapshots from an HTTP link: with significantly less code, requesting a snapshot at a regular interval makes it look like a video stream.
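The snapshot trick comes down to two small pieces of logic: how often to request a new frame, and how to make sure the browser actually fetches a new image each time instead of reusing a cached one. On the page itself this would live in JavaScript (for example, a timer that updates an `<img>` element's `src`); the C++ below only models the same logic. The `/snapshot.jpg` path and the `t` query parameter are hypothetical, since every camera exposes its snapshot URL differently.

```cpp
#include <string>

// Milliseconds to wait between snapshot requests for a target frame rate.
// Returns 0 for a target of 0 FPS, meaning "don't poll". The achieved rate
// will be lower whenever a snapshot takes longer than this to arrive.
unsigned snapshot_interval_ms(unsigned target_fps) {
    if (target_fps == 0) {
        return 0;
    }
    return 1000 / target_fps;
}

// Append a changing query parameter (hypothetical name "t") so the browser
// sees each frame as a new URL rather than reusing its cached copy.
std::string snapshot_url(const std::string& base_url, unsigned long frame) {
    const char separator = (base_url.find('?') == std::string::npos) ? '?' : '&';
    return base_url + separator + "t=" + std::to_string(frame);
}
```

For example, `snapshot_interval_ms(25)` gives 40 ms between requests. Whether the cache-busting parameter is needed depends on the caching headers your camera sends.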

The Tutorial

Build your own!

WiFi Controlled Robot

May 2, 2018

This tutorial will show you how to make a robot that streams a webcam to a custom website that can be remotely controlled.

See the Robot in Action

When I was first planning this post, I wanted to drive the robot around our office while recording my screen, to show how much fun it is to drive and to capture people's reactions as it rolled past their departments. As it traveled, it picked up a few fashion accessories along the way.

But just watching me drive around isn't all that fun. What would be more fun is letting YOU drive the robot around. You can access the robot from ~~this link~~. Unfortunately, it only seems to work in Firefox and Safari. To view the IP camera stream you need to log into the camera. The credentials can be embedded in the camera's URL, but Chrome and Edge block credentials passed this way for security reasons. If you're asked for a user name and password, enter "guest" as the user name and "password" as the password. The camera is capable of 30 FPS, but in real-world conditions the frame rate varies from about 12 to 25 FPS. The controls are pretty simple:
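Embedding the login in the URL uses the `user:password@host` form. Here is a minimal C++ sketch of building such a URL; the `/video.cgi` path is hypothetical (each camera has its own), and reserved characters such as `@` or `:` in a user name or password are percent-encoded so they can't break the URL. As mentioned above, Chrome and Edge still refuse credentials passed this way.

```cpp
#include <cctype>
#include <cstdio>
#include <string>

// Percent-encode everything except RFC 3986 "unreserved" characters so a
// user name or password containing ':' '@' '/' etc. cannot break the URL.
std::string percent_encode(const std::string& s) {
    std::string out;
    for (unsigned char c : s) {
        if (std::isalnum(c) || c == '-' || c == '.' || c == '_' || c == '~') {
            out += static_cast<char>(c);
        } else {
            char buf[4];  // "%XX" plus the terminating null
            std::snprintf(buf, sizeof buf, "%%%02X", static_cast<unsigned>(c));
            out += buf;
        }
    }
    return out;
}

// Build http://user:password@host:port/path with encoded credentials.
std::string camera_url_with_credentials(const std::string& user,
                                        const std::string& password,
                                        const std::string& host,
                                        unsigned port,
                                        const std::string& path) {
    return "http://" + percent_encode(user) + ":" + percent_encode(password) +
           "@" + host + ":" + std::to_string(port) + path;
}
```

For the guest login, `camera_url_with_credentials("guest", "password", "cambot.sparkfun.com", 962, "/video.cgi")` produces `http://guest:password@cambot.sparkfun.com:962/video.cgi`.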

Arrow Keys:

- Up - forward

- Down - backward

- Left - turn left

- Right - turn right

- Press and hold - continuous movement

- Single press - single step forward/backward and slight turn left/right

Ground Rules

First, I don't know what is going to happen. I don't know how many users can connect before the ESP32 crashes, or how many users can access the camera at once. Most importantly, be patient and share. There's nothing fancy in the code to create a queue of users with a time limit on driving. If one person tells it to drive forward and another then tells it to drive in reverse, it will execute whichever command it received last. So try to let other people take turns driving it.
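Without a queue, the robot's behavior is effectively "last writer wins": commands from every user land on the same robot, and each one simply replaces the one before it. A tiny C++ model of that, assuming the robot starts out stopped and using plain strings as stand-ins for the real commands:

```cpp
#include <string>
#include <vector>

// Replay commands in the order the ESP32 received them, from any number of
// users. There is no queue, lock, or per-user time limit: every command just
// overwrites the previous one, so the robot ends up doing whatever came last.
std::string resulting_command(const std::vector<std::string>& received_in_order) {
    std::string current = "stop";  // assumption: the robot idles until told otherwise
    for (const std::string& cmd : received_in_order) {
        current = cmd;  // last writer wins
    }
    return current;
}
```

So if one person sends "forward" and another then sends "backward", `resulting_command({"forward", "backward"})` is "backward", regardless of who sent it.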

Second, you are confined to Engineering. We have barriers set up to keep the robot in my department, and trying to drive over them will just get the robot stuck. I'm going to try to keep an eye on it, but once it gets stuck, it might stay stuck for a while, so it's best to steer clear of the barriers.

The page might go down occasionally. It might be that the battery is being replaced, or the ESP32 and/or camera have crashed due to the number of users. Give it a couple of minutes, and it should be back online. I'm only planning on having the robot online from 8:30am-5:00pm (MDT) on Thursday 3/22 and Friday 3/23.

And that's it! Have fun driving around our offices. If you want to drive by my office and say hi, it's the door with a life-sized(-ish) Han Solo vinyl sticker.

Too much fun! I really like the battery trap... too funny! And absolute genius! Thanks for letting people play with it!

I have built it. I used a Pi Zero W + V2 camera as the webcam, and configured it to stream using the "motion" software package, with motion detection turned off.

With no video, the keyboard control for the cambot worked very well. When I streamed video using the technique described in the blog and tutorial, the video worked great, but the response to the arrow keys became sluggish, and stop signals from key releases were almost never picked up. I was using Safari. I'm not yet sure how to make the keyboard signals more robust.

Alex, thanks again for allowing access to this awesome project. Got to share it with the grand kids late today. We parked it in your office in front of the desk at about 4:30pm your time. Oh, and thanks for waving again. I've got to make one of these.

Heads up! The tutorial is now live!

https://learn.sparkfun.com/tutorials/wifi-controlled-robot

I'm glad you and the grand kids enjoyed it! Tutorial should be public in the next week or so.

Great project! How do you get around the cross-domain issue, given that the browser page points to the ESP32 but the camera link seems to be on another domain?

The "cambot.sparkfun.com:8082" URL is just a DNS mask we set up so you don't have to enter an IP address. The camera and the ESP32 share the same IP but use different ports (the ESP32 is on port 8082 and the camera is on port 962).
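For anyone else wondering about the same question: browsers define an origin as the scheme, host, and port together, so the page on port 8082 and the camera on port 962 are technically different origins even though they share an IP. That likely isn't a problem here because an `<img>` element may display images from any origin; same-origin rules mainly restrict scripts from reading cross-origin responses. A small C++ model of the origin comparison:

```cpp
#include <string>

// A web origin is the (scheme, host, port) triple. URLs that share a host
// name or IP but differ in port are still different origins.
struct Origin {
    std::string scheme;
    std::string host;
    unsigned port;
};

bool same_origin(const Origin& a, const Origin& b) {
    return a.scheme == b.scheme && a.host == b.host && a.port == b.port;
}
```

Comparing `Origin{"http", "cambot.sparkfun.com", 8082}` with `Origin{"http", "cambot.sparkfun.com", 962}` returns false, which is why the port, not the host name, is what makes the camera a separate origin.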

Excellent value for money.

good post

FWIW, in the Black Friday - Cyber Monday sale in November I ordered KIT-14329, thinking I'd integrate it into a robot chassis I'd purchased years before, but then a nasty medical issue arose before I was able to even assemble the KIT-14329. I was hoping to be able to "pan & tilt" the camera independently of the robot's motion. Maybe someday I'll get back to that project!

Anyway, sounds like a fun project, and somewhat simpler (and less expensive) than mine, as well as being more public! (I was thinking partly of helping to find stuff that the cat bats around and ends up under the furniture...)

FWIW, Firefox (58.0.2, running under OSX 10.13.3) gives me a "Secure Connection Failed" message that says:

The connection to cambot.sparkfun.com:8082 was interrupted while the page was loading.

I'm using 59.0.1 on Windows 10 without issues. Since you're on OSX try it from Safari.

Just tried Safari, and it won't even let me open www.sparkfun.com -- oh well...

A few seconds later, and a retry got me into www.sparkfun.com... got essentially the same result from cambot.sparkfun.com (slightly different wording)

By the way, I'm not upset by this -- I've got lots of other things I "should" be doing. I'm completely OK with just saying "oh well...", except that there may be others having the same problem but NOT following the "Please contact the website owners to inform them of this problem."

Something just occurred to me: the URL specifies port 8082, and that might not be making it through my firewall -- I've got it locked down pretty tight. Indeed, it won't even accept an SSH connection from within the house, so I'd have to walk into the other room and log into it to check -- don't have time at the moment.

This is cool. I'll build one.

Very cool. Enjoyed wandering the space and seeing Alex wave hello briefly when I popped in. Should be able to wave back.